Agentic Optimisation (AO): What Comes After SEO and GEO in 2026

- The Machine-Readable Web for Wollongong Businesses -

Agentic Optimisation (AO) makes your website’s data directly discoverable and actionable by AI agents and autonomous workflows, going beyond SEO and GEO so machines can reliably find, parse, and use your information.

Most Wollongong businesses have just started adapting to AI search. There's already a next layer, and almost nobody is ready for it.

You've heard of SEO. You may have heard of GEO (Generative Engine Optimisation): structuring content so AI assistants like Claude, Perplexity, Gemini, and ChatGPT cite your business. But there's a third layer emerging right now that goes further than both: Agentic Optimisation (AO).

AO is the discipline of making your website's data discoverable and actionable by AI agents: autonomous systems that query, retrieve, and act on your information as part of larger automated workflows, without a human in the loop. The businesses that understand this now will have a structural advantage that compounds for years.


Why Search Has a New Layer Most Businesses Have Never Heard Of

The agentic AI market is projected to exceed $10.86 billion in 2026, and AI-to-AI query volume is accelerating at a pace most marketers haven't yet factored into their strategies (Fortune Business Insights, 2026 [1]).

Meanwhile, BrightEdge research found that agentic AI crawl activity doubled in 2025, and the businesses being crawled most are those with clean, structured, fast-loading data (BrightEdge, 2025 [2]).

In our audits of Illawarra websites, we consistently find the same problem: businesses that invested in beautiful design, persuasive copy, and even solid traditional SEO are largely invisible to AI agents because their content is locked inside JavaScript frameworks, missing structured data, or actively blocking AI crawlers in robots.txt.

This article explains what Agentic Optimisation actually is, why it matters for Wollongong businesses right now, and what practical steps you can take immediately, most of which build directly on technical SEO work you may already have in place.

The Three-Tier Search Evolution: SEO → GEO → AO

To understand Agentic Optimisation, it helps to see it as the third layer in a clear evolutionary sequence. No layer replaced the previous one; each added a new audience you must now optimise for simultaneously (World Economic Forum, 2026 [3]).

| Layer | Who Searches | Who Reads Result | Optimise For |
|---|---|---|---|
| Traditional SEO | Human | Human | Rankings, UX, readability |
| Generative Engine Optimisation (GEO) | Human via AI (ChatGPT, Perplexity) | Human | Citations, authority, structured answers |
| Agentic Optimisation (AO) | AI agent (directed by a human) | AI system | Machine-parseable data, speed, APIs |

The critical distinction with AO is that no human ever sees your content during retrieval. An AI agent (say, a procurement bot comparing supplier pricing, a market research pipeline aggregating competitor data, or an AI travel planner assembling itineraries) queries your site, extracts what it needs, and feeds it into a larger automated workflow. Your content either makes it into that workflow or it doesn't (SEO.com, 2025 [4]). For Illawarra businesses in retail, professional services, or tourism, this is already happening; the question is whether you're being retrieved or ignored.

What AI Agents Actually Need From Your Website

When an AI agent queries your Wollongong business website as part of an automated workflow, it doesn't care about your brand colours, testimonial carousel, or hero video. It has a specific set of technical requirements, and if your site doesn't meet them, it moves on in milliseconds (Power Digital Media, 2026 [5]).

- Speed above everything: Many AI systems operate with strict timeouts of 1–5 seconds. Content not returned within that window is simply dropped and never processed, no matter how good it is

- Server-rendered HTML or Markdown: Most AI crawlers do not execute JavaScript. If your content is rendered client-side by a framework like React, Vue, or Angular, it is invisible to most agentic systems

- Unambiguous structured data: Schema.org JSON-LD, clear metadata, semantic HTML elements (<article>, <section>, <main>), and a logical H1–H6 heading hierarchy tell AI agents exactly what your content is about

- Key information at the top of the source: AI agents don't "scroll"; they process what they encounter first in the HTML. Lead with your most important data: what you do, where you are, what you offer, and how to act

- Open, deliberate robots.txt access: Overly aggressive bot-blocking makes you completely invisible to agentic workflows. A deliberate access policy, allowing reputable AI crawlers while managing scraper bots, is now a competitive requirement

In our Illawarra client audits, we regularly find all five of these failing simultaneously on sites that rank perfectly well in traditional Google search. AO readiness and traditional SEO health are not the same thing, and the gap is widening.
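Two of the requirements above, a logical heading hierarchy and semantic landmark elements, can be spot-checked with a few lines of Python. The sketch below is only an illustration using the standard library; the function name audit_structure and the rules it applies (exactly one h1, no skipped heading levels, a <main> element present) are assumptions chosen for the example, not an official AO test.

```python
from html.parser import HTMLParser

SEMANTIC_TAGS = {"article", "section", "main", "header", "nav"}
HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class StructureAudit(HTMLParser):
    """Records heading levels (in document order) and semantic tags seen."""

    def __init__(self):
        super().__init__()
        self.heading_levels = []
        self.semantic_tags = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names before calling this method.
        if tag in HEADING_TAGS:
            self.heading_levels.append(int(tag[1]))
        elif tag in SEMANTIC_TAGS:
            self.semantic_tags.add(tag)


def audit_structure(html):
    """Return a list of plain-English structure problems (hypothetical rules)."""
    parser = StructureAudit()
    parser.feed(html)
    parser.close()

    problems = []
    levels = parser.heading_levels
    if levels.count(1) != 1:
        problems.append(f"expected exactly one h1, found {levels.count(1)}")
    # A heading may go deeper by one level at most (h2 -> h3, not h2 -> h4).
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            problems.append(f"heading jumps from h{prev} to h{cur}")
    if "main" not in parser.semantic_tags:
        problems.append("no <main> element")
    return problems


if __name__ == "__main__":
    sample = "<div><h1>Our Services</h1><h4>Hot water repairs</h4></div>"
    for problem in audit_structure(sample):
        print(problem)
```

An empty list means the page passed these particular checks; it says nothing about speed, rendering, or robots.txt, which need their own tests.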

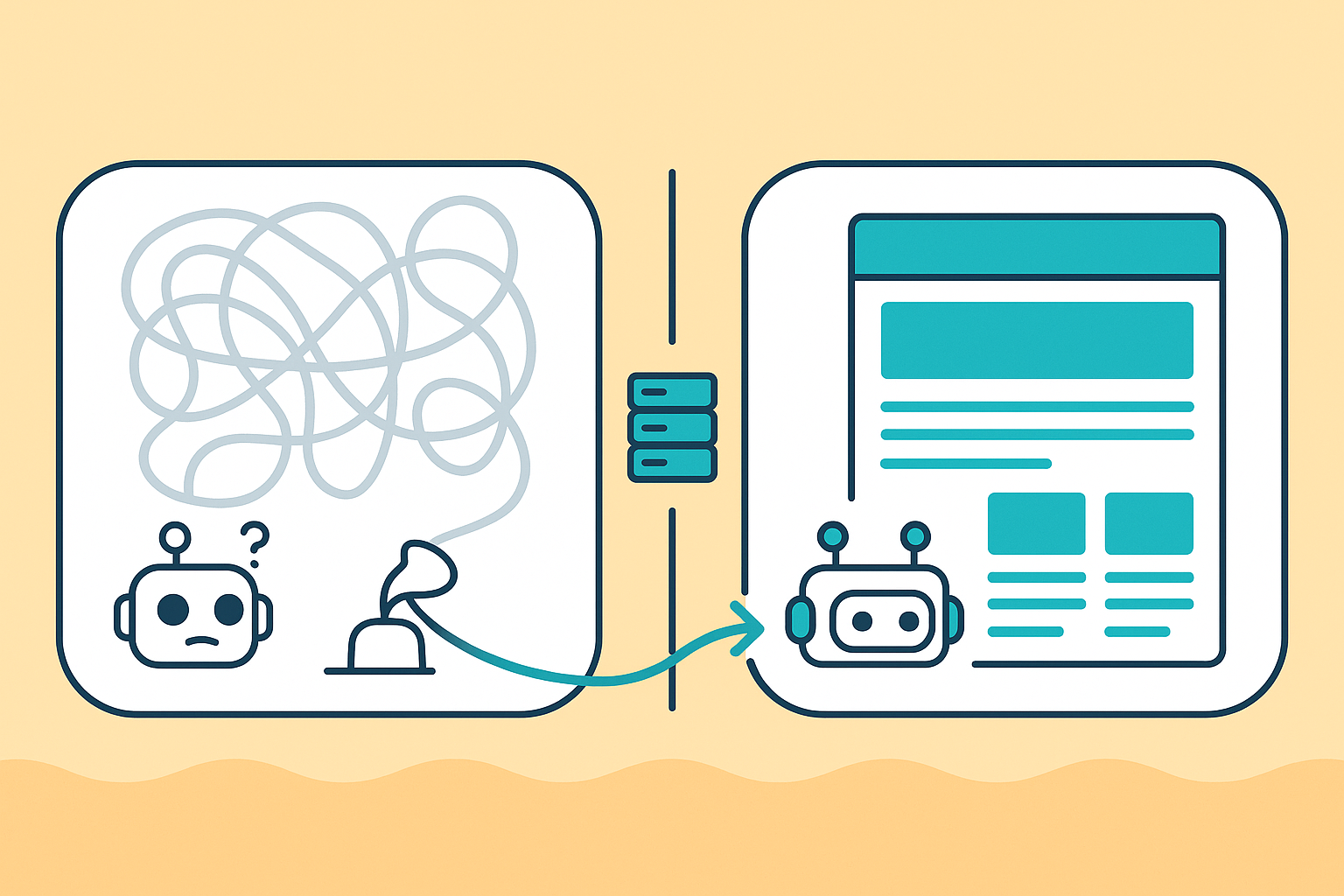

The JavaScript Invisibility Problem

This is the most urgent and underappreciated technical risk in 2026. The majority of modern websites, including many built on popular platforms used by Wollongong SMBs, rely on client-side JavaScript rendering to display content. The HTML page that arrives in response to an AI crawler's request is essentially an empty shell; the actual content only appears after JavaScript executes in a browser environment (ClickRank, 2026 [6]).

Most AI agents, including the crawlers behind ChatGPT, Perplexity, Claude, and emerging agentic workflow platforms, do not execute JavaScript. They receive the empty shell and see nothing useful (Spruik, 2026 [7]). Your entire product catalogue, service descriptions, FAQs, and pricing are invisible.

Warning signs that a site is affected:

- Built on React, Next.js (CSR mode), Vue, Angular, or similar JS frameworks without SSR configured

- Content loaded via AJAX or API calls after initial page load

- Tabs, accordions, or "load more" buttons that reveal content dynamically

- Product or service data pulled from a headless CMS via client-side API calls

- Any page where "View Source" shows significantly less content than what you see in the browser

The fix: Server-Side Rendering (SSR) or static site generation (SSG) ensures content is present in the initial HTML response. For Joomla and WordPress sites common in the Illawarra, this is typically not an issue, but for businesses that have recently rebuilt on modern frameworks, an urgent SSR audit is warranted. Pre-rendering services are also a practical interim solution (LinkedIn / Prerender, 2025 [8]).
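The "View Source" test can be automated. The following is a minimal sketch, assuming you already have the raw HTML of a page as a string (for example, fetched without a browser) and a short list of phrases that must be visible to a crawler. The function name missing_from_raw_html and the sample pages are hypothetical; the parser simply ignores anything inside <script> and <style>, which approximates what a crawler that does not execute JavaScript can read.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that sits outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text found outside script/style blocks.
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html, expected_phrases):
    """Return the phrases that do NOT appear in the un-rendered HTML text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in expected_phrases if phrase.lower() not in text]


if __name__ == "__main__":
    # Hypothetical client-side-rendered page: the content lives only in JavaScript.
    csr_page = (
        '<html><body><div id="root"></div>'
        '<script>render("Emergency plumbing in Wollongong")</script>'
        "</body></html>"
    )
    print(missing_from_raw_html(csr_page, ["Emergency plumbing", "Open 24 hours"]))
```

If the returned list is non-empty for a page whose content looks complete in the browser, that page is a candidate for SSR, SSG, or pre-rendering.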

Structured Data: From Nice-to-Have to Non-Negotiable

Schema.org markup has been a best-practice recommendation for years. In the AO era, it becomes the literal language AI agents use to understand who you are, what you offer, and whether you're relevant to their query (Digital Applied, 2026 [9]). Without it, AI agents make educated guesses, or skip you entirely in favour of a competitor whose data is unambiguous.

For Wollongong and Illawarra businesses, the most impactful schema types to implement immediately are:

- LocalBusiness / ProfessionalService: Name, address, phone, opening hours, service area, the foundational layer for any Illawarra business wanting local agentic discoverability

- FAQPage: Directly answers the questions AI agents are most likely to be querying on your behalf, and the format AI systems prefer for extraction

- Product / Offer: Price, availability, condition, GTIN, essential for any Wollongong e-commerce or retail business competing in agentic commerce workflows

- Article / BlogPosting: Author, date published, topic entity, signals E-E-A-T to both AI agents and Google's own systems

- BreadcrumbList + SiteNavigationElement: Helps AI agents understand your site architecture without needing to crawl every page

Beyond JSON-LD, semantic HTML matters just as much. Using <article>, <section>, <header>, <nav>, and <main> elements correctly gives AI agents a structural context that <div> soup simply cannot provide. In our Illawarra audits, non-semantic HTML is one of the most common and most fixable AO gaps we find. Technical SEO services by Creative Orbit - Wollongong
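To make the LocalBusiness layer concrete, here is a rough sketch of generating a JSON-LD block in Python. The property names (name, telephone, address, openingHours and so on) are standard Schema.org vocabulary; the helper name local_business_jsonld and every business detail in the example are placeholders, so swap in your own data and validate the output with a structured-data testing tool before relying on it.

```python
import json


def local_business_jsonld(name, street, locality, region, postcode,
                          phone, url, opening_hours):
    """Build a <script type="application/ld+json"> tag for a LocalBusiness."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": locality,
            "addressRegion": region,
            "postalCode": postcode,
            "addressCountry": "AU",
        },
        # Schema.org text format, e.g. "Mo-Fr 08:00-17:00".
        "openingHours": opening_hours,
    }
    # Escape "</" so a stray "</script>" inside a value cannot end the tag early;
    # "\/" is a valid JSON escape for "/".
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Placeholder values only, not a real business.
    print(local_business_jsonld(
        name="Example Plumbing Co",
        street="1 Example Street",
        locality="Wollongong",
        region="NSW",
        postcode="2500",
        phone="+61 2 0000 0000",
        url="https://example.com",
        opening_hours=["Mo-Fr 08:00-17:00"],
    ))
```

The resulting tag belongs in the server-rendered HTML of the page, so that it is present in the initial response rather than injected by JavaScript.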

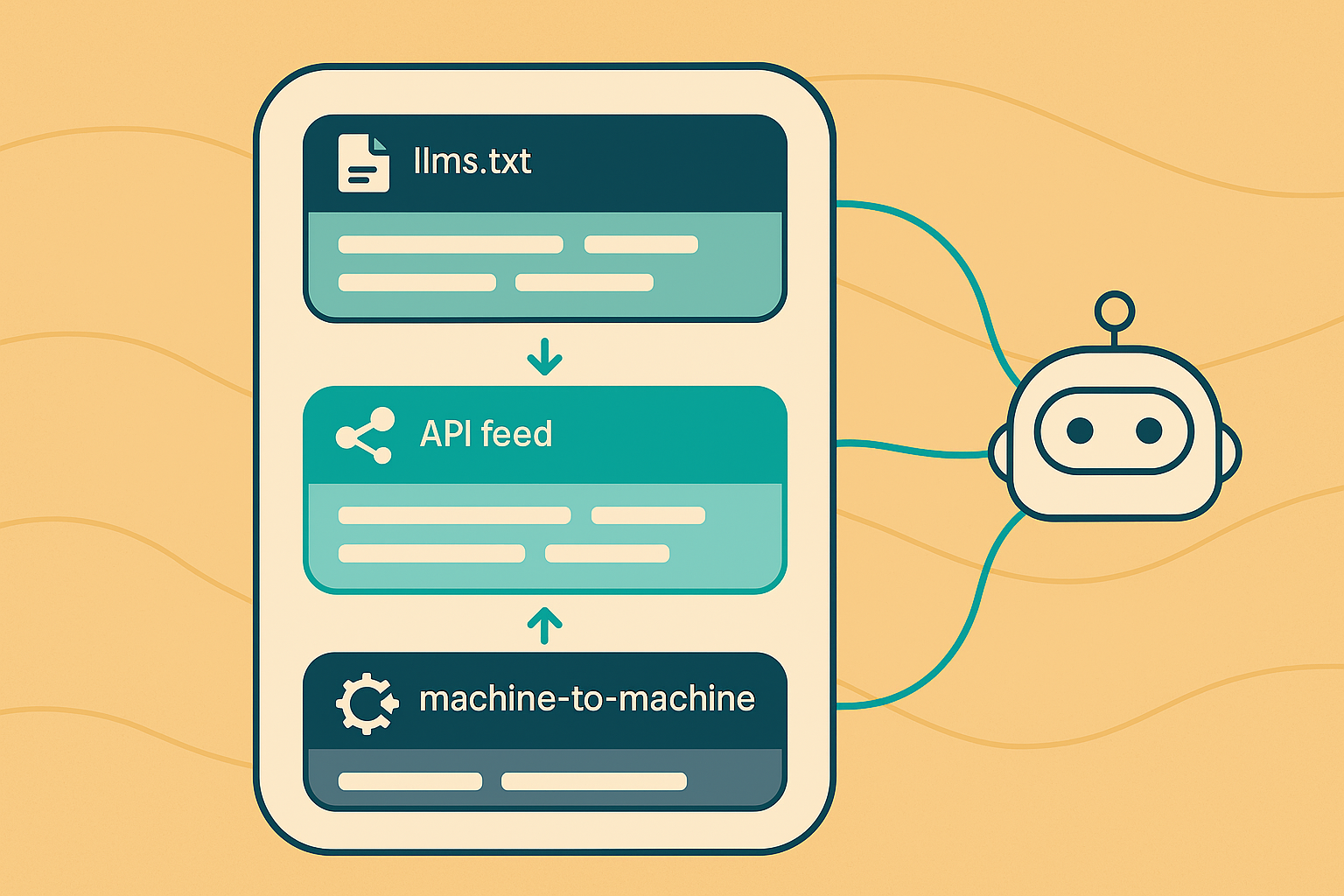

The Machine-to-Machine Tier: APIs, Feeds & llms.txt

Beyond optimising crawlable pages, the highest tier of AO involves giving AI agents direct, structured access to your data, bypassing HTML entirely. This is where the gap between early adopters and everyone else will become most pronounced over the next 12–18 months (Cubitrek, 2026 [10]).

- Public-facing APIs: Allow AI agents to query your product data, service catalogue, knowledge base, or inventory in real-time, structured formats (JSON, XML). A Wollongong retailer with a queryable product API is retrievable by AI procurement agents; one without is not

- RSS / Atom feeds: Let agents subscribe to content updates programmatically, still highly effective and widely supported by AI crawlers. If your Joomla or WordPress site isn't publishing a feed, this is a quick win

- llms.txt files: An emerging standard (analogous to robots.txt) that explicitly tells AI language model systems what content is available, how to access it, and what the most important pages are. Adoption is growing; 4,500+ websites had implemented llms.txt by early 2026 (ALLMO, 2026 [11])

Honest caveat on llms.txt: As of mid-2025, most major AI crawlers did not actively read llms.txt files, and compliance was inconsistent (Reddit / SEO Community, 2025 [12]). However, the standard is gaining rapid traction; implementing it now costs nothing and positions you ahead of the wider adoption. Think of it as planting a flag rather than flipping a switch.
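For a sense of how small an llms.txt file is, the sketch below renders one from a simple Python structure. It follows the layout described in the public llms.txt proposal as we understand it (a Markdown H1 with the site name, a blockquote summary, then H2 sections containing link lists); because the standard is still emerging, treat the exact layout as an assumption and check the current proposal before publishing. The function name render_llms_txt and all URLs are placeholders.

```python
def render_llms_txt(site_name, summary, sections):
    """Render llms.txt content.

    sections maps a section heading to a list of (title, url, note) tuples;
    note may be an empty string.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            suffix = f": {note}" if note else ""
            lines.append(f"- [{title}]({url}){suffix}")
        lines.append("")
    # Finish with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    # Placeholder site details, not a real business.
    print(render_llms_txt(
        "Example Plumbing Co",
        "Licensed plumber serving Wollongong and the Illawarra.",
        {
            "Services": [
                ("Emergency plumbing", "https://example.com/emergency", "24-hour call-outs"),
                ("Hot water systems", "https://example.com/hot-water", ""),
            ],
            "Contact": [
                ("Contact details", "https://example.com/contact", "Phone, address, opening hours"),
            ],
        },
    ))
```

Save the output as llms.txt in the site root, alongside robots.txt.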

Model Context Protocol: The Emerging Standard for Direct AI Integration

Model Context Protocol (MCP) is the most advanced layer of AO currently in active development. Introduced by Anthropic and rapidly adopted across the AI ecosystem, MCP is an open protocol that allows AI agents to interface with a website's data and capabilities as a structured tool, not just a page to crawl (Red Hat Developer, 2026 [13]).

Think of it this way: traditional crawling is like an AI agent reading your brochure. MCP is like giving the AI agent a direct phone line to your systems; it can ask specific questions, retrieve live data, and trigger actions, all within a structured, permissioned framework. Microsoft Dynamics 365 is already implementing MCP server connections to integrate with enterprise AI agents (Microsoft, 2026 [14]).

- Now (2026): MCP is primarily relevant for larger platforms, SaaS products, and businesses with existing APIs. For most Illawarra SMBs, awareness and monitoring is the right posture, not immediate implementation

- 12 months (2027): As MCP-compatible AI agent platforms proliferate, businesses with MCP-enabled data layers will be directly queryable by AI assistants across tools like Claude, ChatGPT, and enterprise automation platforms

- The preparation work starts now: Clean data architecture, well-documented APIs, and structured service/product data are the prerequisites, exactly what good technical SEO delivers today

The robots.txt Risk Most Wollongong Brands Are Ignoring

Here's a scenario playing out across Australian businesses right now: a well-meaning developer adds aggressive bot-blocking to robots.txt to reduce server load or protect content, and inadvertently makes the entire site invisible to GPTBot, ClaudeBot, PerplexityBot, and every other AI agent crawler operating today (Software Seni, 2026 [15]).

The decision of whether to allow or block AI crawlers is now a strategic marketing decision, not a technical housekeeping task. Blocking all bots protects your content from being used for AI training, but it also removes you from AI-generated answers, agentic workflows, and every form of GEO and AO visibility simultaneously.

- Allow reputable AI crawler agents explicitly: GPTBot (OpenAI), ClaudeBot (Anthropic), PerplexityBot, Google-Extended, Meta-ExternalAgent

- Disallow known scrapers and low-quality bots that provide no discoverability value

- Review your current robots.txt immediately: a wildcard Disallow: / under User-agent: * blocks everything, including AI agents

- Add a Crawl-delay directive for high-volume bots if server load is a concern; this preserves access while easing load, although not every crawler honours the directive

In our Illawarra technical audits, robots.txt misconfiguration affecting AI crawlers is one of the most commonly found and easiest-to-fix issues. It takes five minutes to check and five minutes to fix. Technical SEO Audit services
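The five-minute check can be scripted with Python's standard urllib.robotparser module. This is a sketch rather than a full audit: it takes the robots.txt text as a string and reports whether each AI user agent named above may fetch a given URL. The function name ai_access_report and the example.com URL are placeholders, and the standard-library parser may not interpret every rule exactly as each vendor's crawler does.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens taken from the allow-list above.
AI_AGENTS = [
    "GPTBot",
    "ClaudeBot",
    "PerplexityBot",
    "Google-Extended",
    "Meta-ExternalAgent",
]


def ai_access_report(robots_txt, url="https://example.com/"):
    """Map each AI user agent to True (allowed) or False (blocked) for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_AGENTS}


if __name__ == "__main__":
    # A blanket block: every agent, AI crawlers included, is shut out.
    blanket_block = "User-agent: *\nDisallow: /\n"

    # A deliberate policy: two named AI crawlers allowed, everything else blocked.
    deliberate = (
        "User-agent: GPTBot\n"
        "User-agent: ClaudeBot\n"
        "Allow: /\n"
        "\n"
        "User-agent: *\n"
        "Disallow: /\n"
    )

    for label, text in (("blanket block", blanket_block), ("deliberate", deliberate)):
        print(label, ai_access_report(text))
```

Paste in the contents of your own robots.txt to see at a glance which AI crawlers your current policy lets through.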

What Agentic Optimisation Means for Wollongong & Illawarra Businesses

The businesses that win in agentic search are those whose data is structured, fast, and machine-accessible. That's not a new category of work; it maps almost exactly onto the technical SEO and schema markup foundations we've been building for Illawarra clients for years. The difference is urgency and framing: what was previously best practice is now the baseline for a new form of visibility that's growing faster than traditional search ever did (Security Brief AU, 2025 [16]).

For a Wollongong professional services firm, this means your service descriptions, team credentials, and FAQs need to be in clean, structured data, not buried in a JavaScript-rendered page. For a Shellharbour retailer, your product catalogue needs to be queryable. For a Kiama tourism operator, your availability, pricing, and location data need to be in machine-readable formats that an AI travel agent can retrieve and act on in real time.

The doubling of agentic AI crawl activity in 2025 is not a forecast; it already happened (BrightEdge, 2025 [2]). The queries are happening now. The question for every Illawarra business is simply: are you in the answer or not? Google's AI Ecosystem - Creative Orbit - Illawarra & Paid Search Pivots Google AI Overviews

Frequently Asked Questions

What is Agentic Optimisation (AO) and how is it different from SEO?

Traditional SEO optimises your website so humans can find it via search engines. Agentic Optimisation (AO) optimises your website's data so AI agents, autonomous systems running automated workflows, can find, retrieve, and act on your information without a human in the loop. AO focuses on machine-parseable speed, structured data, server-rendered HTML, and direct data-access methods such as APIs and llms.txt files.

What is GEO (Generative Engine Optimisation) and how does it differ from AO?

GEO optimises your content so it is cited by AI assistants like ChatGPT and Perplexity when humans ask them questions, but humans still read the results. AO goes further: it optimises for AI agents that autonomously retrieve and act on your data as part of a larger automated pipeline, with no human reading the response. All three layers, SEO, GEO, and AO, are now required simultaneously.

Is my Wollongong website invisible to AI agents if it uses JavaScript?

Potentially yes. Most AI crawlers, including those behind ChatGPT, Perplexity, and Claude, do not execute JavaScript. If your site uses client-side rendering (React, Vue, Angular without SSR), your content may appear to AI agents as a near-empty HTML shell. The fix is Server-Side Rendering (SSR), static site generation, or a pre-rendering service. Joomla and standard WordPress sites are typically not affected.

What is llms.txt, and should my business implement it?

llms.txt is an emerging file standard, similar to robots.txt, that explicitly tells AI language model systems what content your site has and how to access it. Over 4,500 websites had implemented it by early 2026. While major AI crawlers don't universally support it yet, implementation is free and quick, and it positions you ahead of the wider adoption curve. For most Wollongong businesses, adding llms.txt is a low-effort, forward-looking quick win.

What is Model Context Protocol (MCP), and do I need it now?

MCP is an open protocol that lets AI agents interface directly with your site's data and capabilities as a structured tool, not just a page to crawl. For most Wollongong SMBs, full MCP implementation is a priority for 2027. In 2026, the right move is to build the prerequisite foundations: clean, structured data, well-documented service/product information, and, ideally, a public API for queryable data. Creative Orbit can assess your MCP readiness as part of a technical audit.

How do I get started with Agentic Optimisation for my Illawarra business?

Start with a technical audit covering five areas: (1) page speed and server response time, (2) JavaScript rendering (confirm content is in the initial HTML), (3) Schema.org structured data coverage, (4) robots.txt AI crawler access policy, and (5) llms.txt implementation. Most Illawarra businesses can address all five within a single 90-day project. Creative Orbit offers a free AO Readiness Audit covering all five areas, with no obligation.

Is Your Wollongong Website Ready for Agentic Search?

Most Illawarra businesses aren't yet. A free AO Readiness Audit covers your page speed, JavaScript rendering risk, structured data coverage, robots.txt access policy, and llms.txt readiness. You'll leave with a plain-English report and a prioritised 90-day action list, regardless of whether you engage further.

Based in Keiraville, serving Wollongong, Illawarra, Shoalhaven & Regional NSW. 15+ years of technical SEO experience.

Sources & References

1. Fortune Business Insights, Agentic AI Market Size & Forecast Report, 2026

2. BrightEdge, Agentic AI Activity Doubles: Adapt Your SEO Strategy Now, 2025

3. World Economic Forum, How Brands Are Repositioning for Agentic Engine Optimisation, 2026

4. SEO.com, What is Agentic SEO and Is It the Future of SEO?, 2025

5. Power Digital Media, Agentic SEO & the Machine-Readable Web, 2026

6. ClickRank, Can AI and LLMs Render JavaScript? SEO Reality Check 2026, 2026

7. Spruik, The JavaScript SEO Crisis: Why Your Website Is Invisible to AI Search, 2026

8. LinkedIn / Prerender, How to Make Your Site Visible to AI Crawlers, 2025

9. Digital Applied, Schema Markup AI Generation: Complete Guide 2026, 2026

10. Cubitrek, API-First SEO: Preparing Your Data for Autonomous Agents, 2026

11. ALLMO, LLMs.txt for AI Search Report, 2026

12. Reddit SEO Community, LLMs.txt, Why Almost Every AI Crawler Ignores It, 2025

13. Red Hat Developer, Building Effective AI Agents with Model Context Protocol, 2026

14. Microsoft, Connect AI Agents Using Model Context Protocol Server, 2026

15. Software Seni, How to Govern AI Crawler Access to Your Website in 2026, 2026

16. Security Brief AU, Agentic AI to Transform APJ Businesses by 2026, 2025